Benefits of Using SignalPilot Governed MCP to Build dbt Models

Luiz Fernando

The Status Quo is Broken

Here's how most people build dbt models today:

- Open a SQL client (DBeaver, pgAdmin, DataGrip)

- Manually explore tables:

\d table_name,SELECT * FROM ... LIMIT 10 - Switch to editor, write the model

- Switch to terminal, run

dbt run - Switch back to SQL client, check results

- Repeat

Four context switches per iteration. And if you're using an AI assistant? You're copy-pasting schemas and query results between windows.

What Changes with MCP

MCP (Model Context Protocol) lets AI assistants call tools directly — including database tools. SignalPilot's MCP server exposes governed database access:

list_tables → Full schema with columns, PKs, FKs, row counts

describe_table → Column-level detail with types and annotations

query_database → Read-only SQL with automatic governance

schema_overview → Database-level statistics

The AI assistant gets direct, structured access to your database. No copy-paste. No context switching.

But Direct Database Access is Terrifying

This is where governance matters. SignalPilot doesn't just proxy SQL — it enforces safety:

1. AST-Level Query Parsing

Every SQL query is parsed to an Abstract Syntax Tree before execution. DDL (CREATE, DROP, ALTER) and DML (INSERT, UPDATE, DELETE) are blocked at the parse level — not with regex, but with actual SQL grammar analysis.

2. Automatic LIMIT Injection

No query runs without a row limit. If you write SELECT * FROM gateway_health_events (31K rows), the governance layer injects LIMIT 1000. The AI gets enough data to understand patterns without accidentally pulling gigabytes.

3. Statement Stacking Detection

SELECT 1; DROP TABLE users; — blocked. The parser detects multiple statements and rejects the query before it reaches the database.

4. Full Audit Trail

Every query is logged with:

- Who ran it (user ID)

- Which tables were accessed

- How many rows returned

- Cost in USD

- Whether it was blocked (and why)

5. Cost Governance

Session budgets cap total spend. An AI agent can't run runaway queries — the budget system tracks cumulative cost and blocks when limits are hit.

How This Played Out Building dbt Models

Schema Discovery: One Call, Full Picture

SignalPilot MCP: list_tables("SignalPilot-Ops")

→ public.gateway_orgs (12 rows): org_id*, byok_enabled, created_at

→ public.gateway_audit_logs (4K rows): id*, user_id, timestamp, event_type, ...

→ public.gateway_health_events (31K rows): id*, org_id, connection_name, ...

... (10 tables total)

The AI had the full schema in one structured response. No iterating through information_schema. No manual exploration.

Data Profiling: Governed Exploration

The AI ran exploratory queries to understand data patterns:

-- Discover health tiers

SELECT org_id, connection_name,

ROUND(AVG(CASE WHEN success THEN 1.0 ELSE 0.0 END) * 100, 1) as uptime_pct

FROM gateway_health_events GROUP BY 1, 2 ORDER BY uptime_pct ASC

Each query was:

- Automatically limited to 1000 rows

- Logged to the audit trail

- Tracked for cost

- Read-only enforced

The AI discovered 5 natural health tiers, 3 AI agent types, industry-specific compliance patterns, and budget spending patterns — all through governed queries.

Type Discovery: Catching Data Quirks

One query revealed that gateway_api_keys.created_at stores epoch timestamps as VARCHAR, not DOUBLE PRECISION. The AI's first model failed:

function to_timestamp(character varying) does not exist

It ran a follow-up query through SignalPilot to check the actual type:

SELECT created_at, pg_typeof(created_at) FROM gateway_api_keys LIMIT 3

→ '1735689600' | character varying

Then fixed the model: to_timestamp(created_at::double precision). The governed query tool was the debugging tool.

Validation: Query the Output

After dbt run, the AI queried the marts through SignalPilot to validate:

SELECT org_id, security_score, pii_masking_mode, byok_enabled

FROM public_marts.security_posture

The same governance applies to model outputs. Read-only, audited, limited.

The Compound Benefits

For Individual Developers

- Zero context switching — schema discovery, profiling, model building, validation all in one flow

- Faster iteration — AI writes the model AND validates it, catching type mismatches immediately

- Built-in safety — explore production-like databases without fear

For Teams

- Audit trail — every query the AI ran is logged. You can review what it explored, what it cost

- Agent attribution — track which AI tool (claude-code, cursor, dbt-agent) generated which queries

- Cost governance — budget caps prevent runaway exploration. Our 12-org project cost fractions of a cent

For Compliance

- Read-only by default — no model building process can accidentally modify source data

- PII masking — sensitive columns can be masked before the AI sees them

- BYOK encryption — credentials never leave your infrastructure

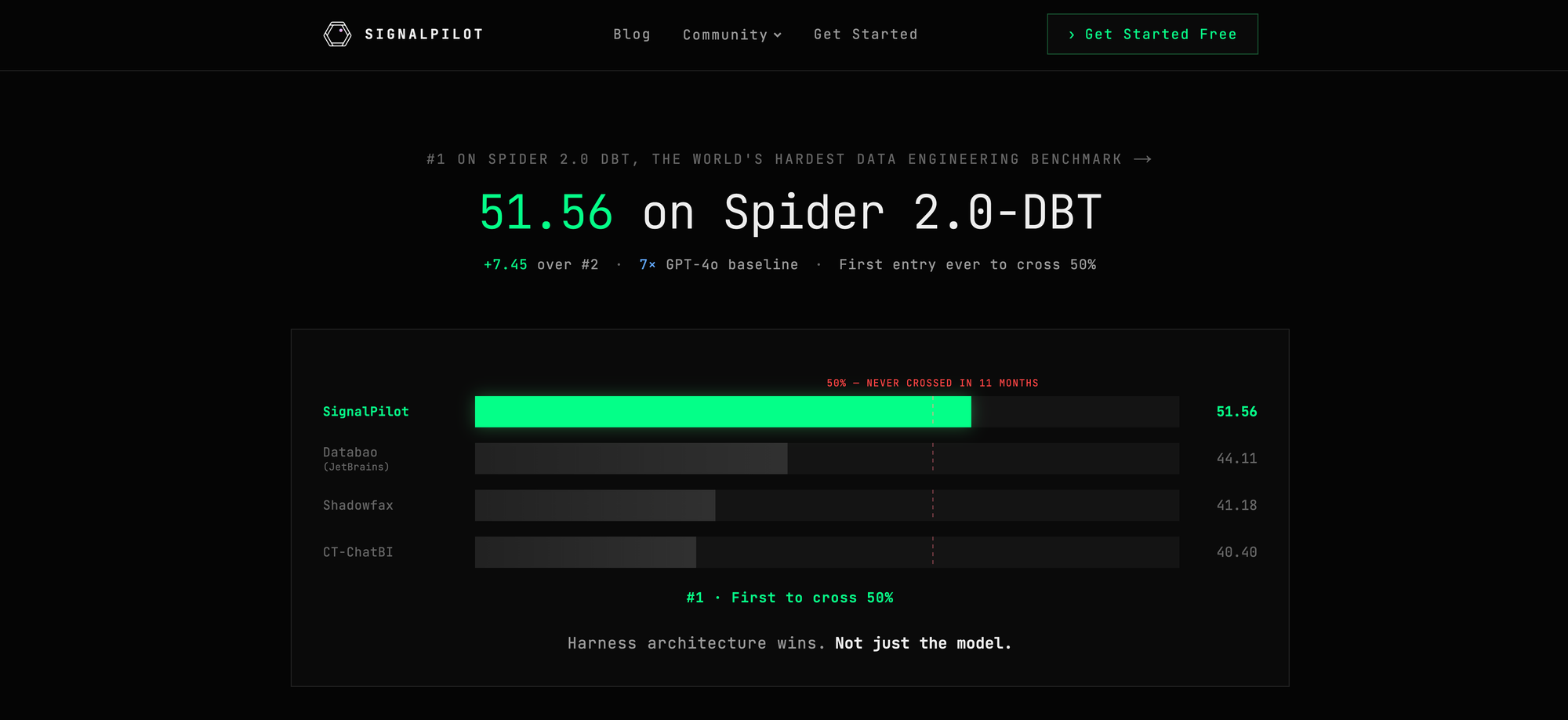

The Numbers from Our Build

| Metric | Value |

|---|---|

| Schema discovery to full understanding | 2 MCP calls |

| Governed SQL queries for profiling | 10 |

| Total dbt models built | 16 |

| Tests passing | 25/25 |

| Every query audited with agent attribution | Yes |

| Automatic LIMIT injection on all queries | Yes |

Setting It Up

1. Configure SignalPilot MCP in Your Coding Agent

Note: SignalPilot works with any MCP-compatible client — Claude Code, Cursor, Windsurf, Codex, Cline, or any agent that speaks the protocol.

claude mcp add --transport http signalpilot https://gateway.signalpilot.ai/mcp

2. Connect Your Database

Register your database connection in SignalPilot. Postgres, Snowflake, BigQuery, Redshift, ClickHouse, and DuckDB are supported.

3. Start Building

Tell your coding agent what you want to build. It will use SignalPilot's MCP tools to explore your schema, profile your data, and build dbt models — all governed.

"I want to build a dbt project against the SignalPilot-Ops database.

Start by exploring the schema and building staging models."

The combination of AI code generation and governed database access isn't just convenient — it's the only safe way to let AI agents build analytics infrastructure against real databases.

Built with Claude Code + SignalPilot MCP.